With the rapid advancement of generative Artificial Intelligence (AI) in information retrieval, voice assistance, and human–machine interaction, integrating intelligent voice perception seamlessly into everyday clothing to create “hearing and responding” smart garments has become an important direction for wearable technology. However, conventional acoustic sensors are bulky and rigid, incompatible with soft textiles, and often deliver weak signals with narrow frequency bandwidths and discrete responses, making them unsuitable for high-efficiency voice recognition powered by deep learning models.

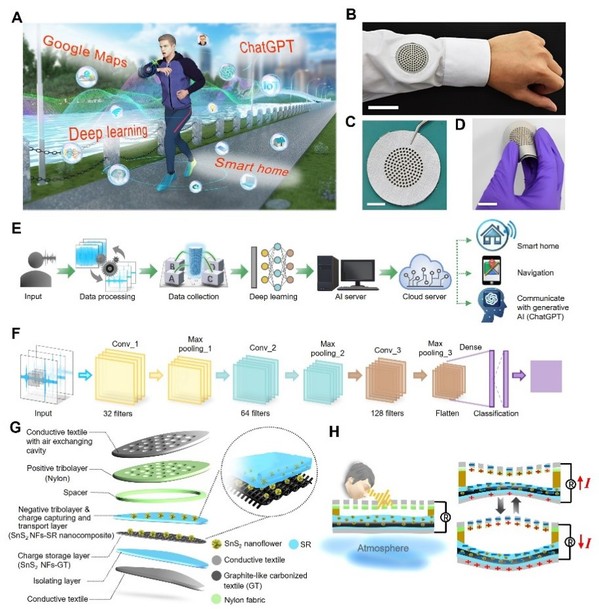

To address these challenges, Prof. Baoquan Sun’s team at the Institute of Functional Nano & Soft Materials (FUNSOM), Soochow University, together with collaborators, has developed a deep learning–empowered acoustic textile (A-Textile). Based on the principle of triboelectric electrostatic generation and a multilayer composite architecture, the A-Textile enables self-powered, fabric-level voice perception and direct interaction with generative AI (ChatGPT), providing a new pathway for intelligent clothing. By incorporating three-dimensional SnS₂ nanoflower structures into silicone rubber to enhance charge capture and transport, and introducing a carbonized-fabric/SnS₂ charge-storage layer, the system achieves high-sensitivity, cavity-free acoustic vibration detection. The A-Textile delivers an output voltage of 21 V, a sensitivity of 1.2 V Pa⁻¹, a frequency resolution of 1 Hz, and a signal-to-noise ratio of 59.3 dB, covering the 80–900 Hz speech range while maintaining stable performance under repeated cycling, humidity, and bending. By integrating a two-dimensional convolutional neural network (2D CNN), the system can accurately recognize voice commands, achieving recognition accuracies of 93.5% and 97.5% in smart-home control and voice navigation applications, respectively. Moreover, it enables real-time voice-to-AI interaction with ChatGPT for spoken queries and task execution, demonstrating the potential of wearable generative-AI terminals. This work realizes a deep fusion of acoustic sensing materials, flexible electronics, and artificial intelligence algorithms, offering a new approach for intelligent textiles and human–machine interactive systems. The study was published in Science Advances (DOI: 10.1126/sciadv.adx3348). Dr. Beibei Shao, Associate Research Fellow at FUNSOM, is the first author of this paper.

Link to paper: https://www.science.org/doi/10.1126/sciadv.adx3348

Title: Deep learning-empowered triboelectric acoustic textile for voice perception and intuitive generative AI-voice access on clothing

Authors: Beibei Shao, Tai-Chen Wu, Zhi-Xian Yan, Tien-Yu Ko, Wei-Chen Peng, Dun-Jie Jhan, Yu-Hsiang Chang, Jiun-Wei Fong, Ming-Han Lu, Wei-Chun Yang, Jiann-Yeu Chen, Ming-Yen Lu, Baoquan Sun*, Heng-Jui Liu*, Ruiyuan Liu*, Ying-Chih Lai*

Editor: Guo Jia